Get lucky

William Perkin did 150 years ago, when he discovered the first aniline dye. (Luck had little to do, however, with the commercial success he made of it.) In an article in Chemistry World I explore this and other serendipitous discoveries in chemistry. Perkin’s wonderful dye is shown in all its glory on the magazine’s cover, and here are the article and the leader that I wrote for Nature to celebrate the anniversary:

*************

Perkin, the mauve maker

150 years ago this week, a teenager experimenting in his makeshift home laboratory made a discovery that can be said without exaggeration to have launched the modern chemicals industry. William Perkin was an 18-year-old student of August Wilhelm Hofmann at the Royal College of Chemistry in London, where he worked on the chemical synthesis of natural products. In one of the classic cases of serendipity for which chemistry is renowned, the young Perkin chanced upon his famous ‘aniline mauve’ dye while attempting to synthesize something else entirely: quinine, the only known cure for malaria.

As a student of Justus von Liebig, Hofmann made a name for himself by showing that the basic compound called aniline that could be obtained from coal tar was the same as that which could be distilled from raw indigo. Coal tar was the residue of gas production, and the interest in finding uses for this substance led to the discovery of many other aromatic compounds. At his parents’ home in Shadwell, east London, Perkin tried to make quinine from an aniline derivative by oxidation, based only on the similarity of their chemical formulae (the molecular structures are quite different). The reaction produced only a reddish sludge; but when the inquisitive Perkin tried it with aniline instead, he got a black precipitate which dissolved in methylated spirits to give a purple solution. Textiles and dyeing being big business at that time, Perkin was astute enough to test the coloured compound on silk, which it dyed richly.

Boldly, Perkin resigned from the college that autumn and persuaded his father and brother to set up a small factory with him at Greenford, near Harrow, to manufacture the dye, called mauve after the French for ‘mallow’. The Perkins and others (including Hofmann) soon discovered a whole rainbow of aniline dyes, and by the mid-1860s aniline dye companies already included the nascent giants of today’s chemicals industry.

(From Nature 440, p.429; 23 March 2006)

***************

A colourful past

The 150th anniversary of William Perkin’s synthesis of aniline mauve dye (see page 429) is more than just an excuse to retell a favourite story from chemistry’s history. It’s true enough that there is still plenty to delight in in that story – Perkin’s extraordinary youth and good fortune, the audacity of his gamble in setting up business to mass-produce the dye, and the chromatic riches that so quickly flowed from the unpromising black residue of coal gas production. As a study in entrepreneurship it could hardly be bettered, for all that Perkin himself was a rather shy and retiring man.

But perhaps the most telling aspect of the story is the relationship that it engendered between pure and applied science. The demand for new, brighter and more colourfast synthetic dyes, along with means of mordanting them to fabrics, stimulated manufacturing companies to set up their own research divisions, and cemented the growing interactions between industry and academia.

Traditionally, dye-making was a practical craft, a combination of trial-and-error experimentation and the rote repetition of time-honoured recipes. This is not to say that the more ‘scholarly’ sciences never benefited from such empiricism – an interest in colour production led Robert Boyle to propose colour-change acidity indicators, for instance. But the idea that chemicals production required real chemical expertise did not surface until the eighteenth century, when the complexities of mordanting and multi-colour fabric printing began to seem beyond the ken of recipe-followers.

That was when the Scottish chemist William Cullen announced that if the mason wants cement, the dyer a dye and the bleacher a bleach, “it is the chemical philosopher who must supply these.” Making inorganic pigments preoccupied some of the greatest chemists of the early nineteenth century, among them Nicolas-Louis Vauquelin, Louis-Jacques Thénard and Humphry Davy. Perkin’s mauve was, however, an organic compound, and thus, in the mid-nineteenth century, rather more mysterious than metal salts. While the drive to understand the molecular structure of carbon compounds during this time is typically presented now as a challenge for pure chemistry, it owed as much to the profits that might ensue if the molecular secrets of organic colour were unlocked.

August Hofmann, Perkin’s one-time mentor, articulated the ambition in 1863: “Chemistry may ultimately teach us systematically to build up colouring molecules, the particular tint of which we may predict with the same certainty with which we at present anticipate the boiling point.” Both the need to understand molecular structure and the demand for synthetic methods were sharpened by chemists’ attempts to synthesize alizarin (the natural colourant of madder) and indigo. When Carl Graebe and Carl Liebermann found a route to the former in 1868, they quickly sold the rights to the Badische Anilin- und Soda-Fabrik (BASF). One of those who found a better route in 1869 was Ferdinand Riese, who was already working for Hoechst. (Another was Perkin.) These and other dye companies, including Bayer, Ciba and Geigy, had already seen the value of having highly skilled chemists on their payroll – something that was even more evident when they began to branch into pharmaceuticals in the early twentieth century. Then, at least, there was no doubt that good business needs good scientists.

(From Nature 440, p.384; 23 March 2006)

Wednesday, May 31, 2006

Platinum sales

We all know that platinum is a precious metal, but paying close to $3 million for a few grams of it seems excessive. Yet that is what a private art collector has just done. The inflated value of the metal in this case stems from how it is arranged: as tiny black particles scattered over a sheet of gummed paper so as to portray an image of the moon rising over a pond on Long Island in 1904. This is, in other words, a photograph, defined in platinum rather than silver. It was taken by the American photographer Edward Steichen, and in February it sold at Sotheby’s of New York for $2,928,000 – a record-breaking figure for a photo.

At the same sale, a photo of Georgia O’Keeffe’s hands recorded in a palladium print by Alfred Stieglitz in 1918 went for nearly $1.5 million (the story is told by Mike Ware here). Evidently these platinum-group images have become collectors’ items.

The platinotype process was developed (excuse the pun) in the nineteenth century to address some of the shortcomings of silver prints. In particular, while silver salts have the high photosensitivity needed to record an image ‘instantly’, the metal fades to brown over time because of its conversion to sulphide by reaction with atmospheric sulphur gases. That frustrated John Herschel, one of the early pioneers of photography, who confessed in 1839 that ‘I was on the point of abandoning the use of silver in the enquiry altogether and having recourse to Gold or Platina’.

Herschel did go on to create a kind of gold photography, called chrysotype. But it wasn’t until the 1870s that a reliable method for making platinum prints was devised. The technique was created by the Englishman William Willis, and it became the preferred method for high-quality photography for the next 30 years. A solution of iron oxalate and chloroplatinate was spread onto a sheet coated with gum arabic or starch and exposed to light through the photographic negative. As the platinum was photochemically reduced to the finely divided metal, the image appeared in a velvety black that, because of platinum’s inertness, did not fade or discolour. ‘The tones of the pictures thus produced are most excellent, and the latter possess a charm and brilliancy we have never seen in a silver print’, said the British Journal of Photography approvingly.

To enrich his moonlit scene, Steichen added a chromium salt to the medium; trapped in the gum, it forms a greenish pigment that gives the print a tinted, painterly look reminiscent of the night scenes painted by James McNeill Whistler. Steichen and Stieglitz helped to secure the recognition of photography as a serious art form in the USA.

Stieglitz’s use of palladium rather than platinum in 1918 reflects the demise of the platinotype. Platinum was used to catalyse the manufacture of high explosives in World War I, and so it could not be spared for so frivolous a purpose: making shells was more important than making art.

(This article will appear in the July issue of Nature Materials.)

Friday, May 26, 2006

Science, voodoo… or just ideology?

The past few weeks have been a time of turmoil for economic markets. They have been lurching and plunging all over the place, prompting a rash of ‘explanations’ from market analysts. ‘The noise in the markets is the sound of everyone and his dog coming up with post-facto justifications for the apparently random movements in assets from gold to equities, copper and the dollar’, says Tom Stevenson in his 23 May Investment Column in the Daily Telegraph. ‘And while the latest rationalisation sounds plausible enough when you’ve just heard it, so too does the next believable but contradictory explanation.’

Full marks to Stevenson for his honesty. But then he goes on to suggest that ‘what drives financial markets is not the ebb and flow of investment ratios and economic statistics but the fickle and often lemming-like workings of investors’ minds… In other words, asset prices are falling simply because they’ve been rising sharply and investors have become more nervous.’ Now, I am not a highly paid investment analyst, but I can’t help feeling that Stevenson is hardly dropping a bombshell here. Weather forecasters would be unlikely to gain many plaudits by telling us that ‘tomorrow it will rain because some water vapour has evaporated and then condensed up in the sky.’

I suppose we should be grateful that Stevenson is at least not recycling one of the many voodoo tales to which he alludes. But his comments are a symptom of the extraordinary state of economic punditry today – which is itself a reflection of the bizarre state of economic theory. When Thomas Carlyle called it the ‘dismal science’, he was (contrary to popular belief) making a judgement not about its quality but about its seemingly gloomy message. But ‘dismal’ hardly does the situation justice today – there is no other ‘science’, hard or soft, that has got itself into a comparably strange and parlous state.

Everyone knows that market statistics, such as commodity prices, fluctuate wildly over a wide range of timescales (while, in the long term, showing generally steady growth). There is nothing particularly remarkable or surprising about that: clearly, the economy is a complex system (one of the most complex we know of, in fact), and such systems, whether they be earthquakes or landslides or biological populations or electronic circuits, show pronounced and seemingly random noise. What is unusual about economic noise, however, is that an awful lot of money rides on it.

That is why, rather than accepting it as noise, economists and market analysts are desperate to ‘explain’ it. Imagine a physicist looking through a magnifying glass at the wiggles in her data and deciding to find a causal explanation for each individual spike. Yet that is precisely the game in market analysis.

The standard approach to this aspect of economic theory is as revealing as it is disturbing. Economic noise is a ‘bad thing’, because it seems to undermine the notion that economists understand the economy. And so it is banished. Noise, they say, has nothing to do with the operation of the market. In the ‘neoclassical’ theory that dominates all of academic economics today, markets are instantaneously in equilibrium, so that they display optimal efficiency and all goods find their way effectively to those who want them. So the marketplace would run as smoothly as the Japanese rail network – if only it did not keep getting disrupted by external ‘shocks’.

These shocks come from factors such as technological change – an idea that goes back to Marx – which force the market constantly to readjust itself. The very language of this process, in which economists talk of ‘corrections’ to the market, betrays their insistence that none of this is the fault of the market itself, which is simply doing its best to accommodate the nasty outside world. “Nothing more useless than listening to a newscaster tell us how the market just made a little ‘correction’”, says Joe McCauley of the University of Houston, who believes that ideas from physics can help explain what is really going on in economics. (I have a forthcoming article in Nature on this topic.)

“The economists incorrectly try to imagine that the system is in equilibrium, and then gets a shock into a new equilibrium state”, says McCauley. “But real economic systems are never in equilibrium. There is, to date, no empirical evidence whatsoever for either statistical or dynamic equilibrium in any real market. In their way of thinking, they treat one single point in a time series as ‘equilibrium’, and that is total nonsense. It’s completely unscientific.”

Unscientific perhaps – but politically useful. While the idea of perfect market efficiency rules, it is easy to argue that any tampering with market mechanisms – any regulation of free markets – is harmful to the economy and therefore pernicious. And that is the suggestion that has dominated the climate of US economic policy since the Reagan administration, as Paul Krugman points out in his excellent book Peddling Prosperity (W. W. Norton, 1994). Of course, the existence of an unregulated economy suits big business just fine, even if there is no objective reason at all to believe that it is the optimal solution to anything.

A little history makes it clear how economics got into this state. Adam Smith’s idea of an ‘invisible hand’ that matches supply to demand was a truly fundamental insight, elucidating how a market can be self-regulating. It chimed with the Newtonian tenor of Smith’s times, and led to the notion (which Smith himself never expressed as such) of ‘market forces’. Then at the end of the nineteenth century, the architects of what became the standard neoclassical economic theory of today – men like Francis Edgeworth and Alfred Marshall – were highly influenced by the ideas on thermodynamic equilibrium developed by scientists such as James Clerk Maxwell and Ludwig Boltzmann. Concepts of equilibration and balancing of forces, imported from physics, are to blame for the misleading notions at the heart of modern economics.

Ah, but there was still no escaping those fluctuations – certainly not in the 1930s, when they led to the biggest ever market crash and the Great Depression that followed. But at that time the scientific concept of noise, pioneered by Einstein and Marian Smoluchowski, was in its infancy. Much better understood was the concept of oscillations – of periodic movements. And so economists decided that, because sometimes prices rose and sometimes they fell, they must be ‘cyclic’, which is to say, periodic. This gave rise to the most extraordinary proliferation of theories about economic ‘cycles’ – there were Kitchin cycles, Juglar cycles, Kuznets and Kondratieff cycles… The Great Crash was then simply an unfortunate piling up of troughs in different cycles. Some were more sophisticated: in the 1930s US accountant Ralph Elliott proposed that markets wax and wane in waves based on the Fibonacci series, a piece of numerology that even today leaves analysts making forecasts based on the Golden Mean, as though they have been spending rather too long with The Da Vinci Code.

As a result, we are now seemingly stuck with the concept of the ‘business cycle’, a piece of lore so deeply embedded in economics that it is almost impossible to discuss market behaviour without it. So let’s get it straight: there is no business cycle in any meaningful sense – nothing cyclic about ‘bull’ and ‘bear’ markets. Sometimes traders decide to buy; sometimes they sell. Sometimes prices rise; sometimes they fall. Things go up and down. That is not a ‘cycle’. “There is no empirical evidence for cycles or periodicity”, says McCauley. “And there is also no evidence for periodic motion plus noise. There is only noise with [long-term] drift. So the better phrase would be 'business fluctuations'.” But ‘fluctuations’ sounds a little scary – as though there might be something unpredictable about it.
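McCauley’s distinction between ‘cycles’ and ‘noise with drift’ can be made concrete with a toy simulation. What follows is my own minimal sketch, not McCauley’s model: a log-price that performs a random walk with a small constant drift, each step being an independent Gaussian shock. Nothing periodic is built in anywhere, yet the path still rises and falls, reversing direction over and over, which is just the kind of behaviour that tempts people to see ‘cycles’. The drift, volatility, run length and seed are arbitrary assumptions chosen for illustration.

```python
import random


def random_walk_with_drift(n_steps, drift=0.0003, volatility=0.01, seed=1):
    """Return a list of log-prices. Each step adds a constant drift plus an
    independent Gaussian shock; no cycle or period is built in anywhere.
    The default parameter values are illustrative assumptions."""
    rng = random.Random(seed)
    log_price = 0.0
    path = [log_price]
    for _ in range(n_steps):
        log_price += drift + rng.gauss(0.0, volatility)
        path.append(log_price)
    return path


def count_turning_points(path):
    """Count local peaks and troughs: places where the direction of movement reverses."""
    turns = 0
    for a, b, c in zip(path, path[1:], path[2:]):
        if (b - a) * (c - b) < 0:
            turns += 1
    return turns


def lag1_autocorrelation(xs):
    """Sample lag-1 autocorrelation of a series (close to zero for independent shocks)."""
    n = len(xs)
    mean = sum(xs) / n
    var = sum((x - mean) ** 2 for x in xs)
    cov = sum((xs[i] - mean) * (xs[i + 1] - mean) for i in range(n - 1))
    return cov / var


path = random_walk_with_drift(2500)
increments = [b - a for a, b in zip(path, path[1:])]
print("turning points:", count_turning_points(path))
print("lag-1 autocorrelation of steps:", round(lag1_autocorrelation(increments), 3))
print("net change over the run:", round(path[-1] - path[0], 3))
```

Because each step is independent of the last, the lag-1 autocorrelation of the steps should come out close to zero; any ‘waves’ you see in a plot of such a path are the eye finding patterns in noise, riding on a slow upward drift.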

And anyway, there is a neoclassical explanation for business cycles, so no need to worry. It was concocted by Finn Kydland and Edward Prescott, and they won the 2004 Nobel prize for it. Surely that counts for something? Not in McCauley’s view: “The K&P model is totally misleading and, like many other examples in economics, should have been awarded a booby prize, certainly not a Nobel Prize.” This model gives the favoured explanation: those pesky fluctuations are ‘exogenous’, imposed from outside. The market is perfect; it’s just that the world gets in the way.

You’d think we must have a good understanding of business cycles, because some economic policies depend on it. One of the five criteria for the UK entering into the European monetary union – for adopting the euro – is that UK business cycles should converge with those of countries that now have the euro. This, I assumed, must be predicated on some model of what causes the business cycle – surely we wouldn’t make a criterion like this if we didn’t understand the phenomenon that underpinned it? So I wrote to the UK Treasury two years ago to ask what model they used to understand the business cycle. I’m still waiting for an answer.

Economics as a whole is not a worthless discipline – indeed, some of the recent Nobels (look at 1998, 2002 and 2005, for example) have been awarded for genuinely exciting work. But McCauley is not alone in thinking that its core is rotten. Steve Keen’s Debunking Economics (Pluto Press, 2001) demonstrates that, even if you accept the absurdly simplistic first principles of neoclassical microeconomic theory, the way the theory unfolds is internally inconsistent. Economists interested in using agent-based modelling to explore realistic agent behaviour and non-equilibrium markets have become so fed up with their exclusion from the mainstream that they are starting their own journal, the Journal of Economic Interaction and Coordination. Such models have made it very clear that Tom Stevenson’s hunch that market fluctuations come from herding behaviour – that the noise is intrinsic to the economy, not an external disturbance – is right on the mark. So much so, in fact, that it is odd and disheartening to see commentators still presenting this as though it were some kind of revelation.

Monday, May 22, 2006

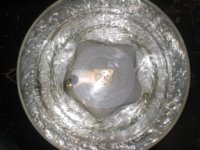

Weird things in a bucket of water

That's all you need to punch a geometric hole in water. Take a look. When the bucket is rotated so fast that the depression in the central vortex reaches the bottom, its cross-section can take on polygonal shapes: triangles, squares, pentagons and hexagons. My story about it is here.

Harry Swinney at Texas says that this isn't unexpected – a symmetry-breaking wavy instability is bound to set in eventually as the rotation speed rises. Harry has seen related things in rotating disk-shaped tanks (without a free water surface) created to model the flows on Jupiter (see Nature 331, 689; 1988).

The intriguing question is whether this has anything to do with the polygonal flows and vortices seen in planetary atmospheres – both in hurricanes on Earth and in the north polar circulation of Saturn. It's not clear that it does – Swinney points out that the Rossby number (the dimensionless number that dictates the behaviour in the planetary flows) is very different in the lab experiments. But he doesn't rule out the possibility that the phenomenon could happen in smaller-scale atmospheric features, such as tornadoes. Tomas Bohr tells me he has heard anecdotally that similar polygonal structures have been produced in the 'toy tornadoes' made by Californian artist Ned Kahn – whose work, frankly, you should check out in any case.
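For anyone who wants the definition behind Swinney's point: the Rossby number is conventionally written Ro = U/(fL), the ratio of inertial to Coriolis effects, where U is a typical flow speed, L a typical length scale and f = 2Ω sin φ the Coriolis parameter (Ω being the planet's rotation rate and φ the latitude). Small Ro means the planet's rotation dominates the flow; large Ro means it barely matters. The sketch below simply evaluates that formula. The speeds and lengths in it are rough orders of magnitude I have assumed for illustration, not measurements from the bucket experiments or from Swinney.

```python
import math

EARTH_ROTATION_RATE = 7.2921e-5  # rad/s, Earth's sidereal rotation rate


def coriolis_parameter(latitude_deg, omega=EARTH_ROTATION_RATE):
    """Coriolis parameter f = 2 * Omega * sin(latitude), in 1/s."""
    return 2.0 * omega * math.sin(math.radians(latitude_deg))


def rossby_number(speed, length, latitude_deg, omega=EARTH_ROTATION_RATE):
    """Rossby number Ro = U / (f L), dimensionless.
    Undefined at the equator, where f = 0."""
    return speed / (coriolis_parameter(latitude_deg, omega) * length)


# Illustrative orders of magnitude only (assumed values, not measurements):
ro_weather_system = rossby_number(speed=10.0, length=1.0e6, latitude_deg=45.0)
ro_tornado = rossby_number(speed=50.0, length=100.0, latitude_deg=35.0)
print(f"large weather system: Ro ~ {ro_weather_system:.2g}")
print(f"tornado: Ro ~ {ro_tornado:.2g}")
```

With these assumed numbers a continent-sized weather system comes out with Ro well below 1, while a tornado comes out in the thousands: at the scale of a tornado the Earth's rotation hardly matters, which is one way to see why Swinney would not rule out a connection at smaller scales.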

Friday, May 19, 2006

The return of Dr Hooke

Yes, he seemed pleased to be back at the Royal Society after 303 years – though disconcerted at the absence of his portrait, especially while those of his enemies Oldenburg and Newton were prominently displayed. Hooke had returned to present his long-lost notes to the President, Sir Martin Rees. These notes, Hooke’s personal transcriptions of the minutes of the Royal Society between 1661 and 1682, had been found in a cupboard in Hampshire and were due to be auctioned at Bonhams until the Royal Society managed to raise the £1 million or so needed to make a last-minute deal. The official hand-over happened on 17th May, and the chaps at the Royal Society decided it would be fun to have Hooke himself do the honours. But who should don the wig? Well, I hear that bloke Philip Ball has done a bit of acting…

I didn’t take much persuading, I have to admit. Partly this is because I can’t help feeling some affinity to Hooke – not because I am short with a hunched back, a “man of strange unsociable temper”, and constantly getting into priority disputes, but because I too was born on the Isle of Wight. (There is scandalously little recognition of that fact on the Island – I suspect that most residents do not realise that is why Hook Hill in Freshwater is so named.) But it was also because I could not pass up the opportunity to get my hands on his papers. I didn’t, however, reckon on getting half an hour alone with them. I had a good look through, but I’m afraid I’m not able to reveal any exclusive secrets about what they contain – not because I am sworn to silence, but because, what with Hooke’s incredibly tiny scrawl and my struggles to keep my stockings from falling down without garters, I didn’t get much chance to study them in detail. All the same, it was thrilling to see pages headed “21 November 1673: Chris. Wren took the chair.” And I enjoyed a comment that the Royal Society had received a letter from Antoni van Leeuwenhoek but deferred reading it until the next meeting because “it is in Low Dutch and very long.”

The anonymous benefactors truly deserve our gratitude for keeping these pages where they belong – at the Royal Society. There will be a video of the proceedings on the RS’s web pages soon.

Tuesday, May 16, 2006

Are chemists designers?

Not according to a provocative article by Martin Jansen and Christian Schön in Angewandte Chemie. They argue that 'design' in the strict sense doesn't come into the process of making molecules, because the freedom of chemists is so severely constrained by the laws of physics and chemistry. Whereas a true designer shapes and combines materials plastically to make forms and structures that would never have otherwise existed, chemists are simply exploring predefined minima in the energy landscape that determines the stable configurations of atoms. Admittedly, they say, this is a big space (the notion of 'chemical space' has recently become a hot topic in drug discovery) – but nonetheless all possible molecules are in principle predetermined, and their structures cannot be varied arbitrarily. This discreteness and topological fixity of chemical space means (they say) that "the possibility for 'design' is available only if the desired function can be realized by a structure with essentially macroscopic dimensions." You can design a teapot, but not a molecule.

Chemists won't like this, because they (rightly) pride themselves on their creativity and often liken their crafting of molecules to a kind of art form. Having written about molecular and materials design in two books and many articles, I might be expected to share that response. And in fact I do, though I think that Jansen and Schön's article is extremely and usefully stimulating and makes some very pertinent points. The most immediate and, I think, telling objection to their thesis is that the permutations of chemical space are so vast that it really doesn't matter much that they are preordained and discrete. One estimate gives the number of small organic molecules alone as 10^60, which is more than we could hope to explore (at today's rate of discovery/synthesis) in a billion years.

Given this immense choice, chemists must necessarily use their knowledge, intuition and personal preferences to guide them towards molecules worth making – whether that is just for the fun of it or because the products will fulfil a specific function. Designers do the same – they generally look for function and try to achieve it with a certain degree of elegance. The art of making a functional molecule is generally not a matter of looking for a complete molecular structure that does the job; it usually employs a kind of modular reasoning, considering how each different part of the structure must be shaped to play its respective role. We need a binding group here, a spacer group there, a hydrophilic substituent for solubility, and so on. That seems a lot like design to me.

Moreover, while it's true that one can't in general alter the length or angle of a bond arbitrarily, one can certainly establish principles that enable a more or less systematic variation of such quantities. For example, Roald Hoffmann and his colleagues have recently considered how one might compress carbon-carbon bonds in cage structures, and have demonstrated (in theory) an ability to do this over a wide range of lengths (see the article here). The intellectual process here surely resembles that of 'design' rather than merely 'searching' for stable states.

Jansen and Schön imply that true design must include an aesthetic element. That is certainly a dimension open to chemists, who regularly make molecules simply because they consider them beautiful. Now, this is a slippery concept – Joachim Schummer has pointed out that chemists have an archaic notion of beauty, defined along Platonic lines and thus based on issues of symmetry and regularity. (In fact, Platonists did not regard symmetry as aesthetically beautiful – rather, they felt that order and symmetry defined what beauty meant.) I have sometimes been frustrated myself that chemists' view of what 'art' entails so often falls back on this equating of 'artistic' with 'beautiful' and 'symmetric', so that they isolate themselves from any real engagement with contemporary ideas about art. Nonetheless, chemists clearly do possess a kind of aesthetic in making molecules – and they make real choices accordingly, which can hardly be stripped of any sense of design just because the options are discrete.

Jansen and Schön suggest that it would be unwise to regard this as merely a semantic matter, allowing chemists their own definition of 'design' even if technically it is not the same as what designers do. I'd agree with that in principle – it does matter what words mean, and all too often scientists co-opt and then distort them for their own purposes (and are obviously not alone in that). But I don't see that the meaning of 'design' actually has such rigid boundaries that it will be deformed beyond recognition if we apply it to the business of making molecules. Keep designing, chemists.

Wednesday, May 10, 2006

The Big Bounce

The discovery in 1998 that the universe is not just expanding but accelerating was inconvenient because it meant that cosmologists could no longer ignore the question of the cosmological constant. The acceleration is said to be caused by ‘dark energy’ that makes empty space repulsive, and the most obvious candidate for that is the vacuum energy, due to the constant creation and annihilation of particles and their antiparticles. The problem is that quantum theory implies that this energy should be enormous – too great, in fact, to allow stars and galaxies to form at all. So long as the cosmological constant could be assumed to be zero, it was reasonable to imagine that this energy was somehow cancelled out perfectly by another aspect of physical law, even if we didn’t know what it was. But now it seems that the ‘cancellation’ is not perfect but absurdly fine-tuned, leaving a value within a whisker of zero: to one part in 10^120, in fact. How do we explain that?

A new proposal invokes a cyclic universe. I asked one of its authors, Paul Steinhardt, about the idea, and he made some comments which didn’t find their way into my article but which I think are illuminating. So here they are. Thank you, Paul.

PB: How is a Big Crunch driven, in a universe that has been expanding for a trillion years or so with a positive cosmological constant, i.e. a virtually empty space? I gather this comes from the brane model, where the cyclicity is caused by an attractive potential between the branes that operates regardless of the matter density of the universe - is that right?

PS: Yes, you have it exactly right. The cycles are governed by the spring-like force between branes that causes them to crash into one another at regular intervals.

PB: What is your main objection to explaining the fine-tuning dilemma using the anthropic principle? One might wonder whether it is more extravagant to posit an infinite number of universes, with different fundamental constants, or a (quasi?)infinite series of oscillations of a single universe.

PS: I have many objections to the anthropic principle. Let me name just three:

a) It relies on strong, untestable* assumptions about what the universe is like beyond the horizon, where we are prevented by the laws of physics from performing any empirical tests.

b) In current versions, it relies on the idea that everything we see is a rare/unlikely/bizarre possibility. Most of the universe is completely different - it will never be habitable; it will never have physical properties similar to ours; and so on. So, instead of looking for a fundamental theory that predicts what we observe as being LIKELY, we are asked to accept a fundamental theory that predicts what we see is UNLIKELY. This is a rather significant deviation from the kind of scientific methodology that has been so successful for the last 300 years.

*I would like to emphasize that I said "untestable assumptions". Many proponents of the anthropic model like to argue that they make predictions and that those predictions can be tested. But it is important to appreciate that this is not the standard that must be reached for proper science. You must be able to test the assumptions as well. For example, the Food and Drug Administration (thankfully) follows proper scientific practice in this sense. If I give you a pill and "predict" it will cure your cold, and then you take the pill and your cold is cured, the FDA is not about to give its imprimatur to your pill. You must show that your pill really has the active ingredient that CAUSED the cure. Here, that means proving that there is a multiverse, that the cosmological constant really does vary outside our horizon, that it follows the kind of probability distribution that is postulated, etc. – all things that cannot ever be proved because they entail phenomena that lie outside our allowed realm of observation.

PB: Could you explain how your model of cyclicity and decaying vacuum energy leads to an observable prediction concerning axions - and what this prediction is? (What are axions themselves, for example?)

PS: This may be too much for your article, but....

Axions are fields that many particle physicists believe are necessary to explain a well-known difficulty of the "standard model" of particles called "the strong CP problem." For cosmological purposes, these are examples of very light, very weakly interacting fields that very slowly relax to the small value required to solve the strong CP problem. In string theory, there are many analogous light fields; they control the size and shape of extra dimensions; they are also light and slowly relax.

A potential problem with inflation is that inflation excites all light fields. It excites the field responsible for inflation itself, which is what gives rise to the temperature variations seen in the cosmic microwave background and to the fluctuations responsible for galaxy formation. So this is good.

But what is bad, potentially, is that inflation also excites the axion and all the other light degrees of freedom. This acts like a new form of energy in the universe that can overtake the radiation and change the expansion history of the universe in a way that is cosmologically disastrous. So, you have to find some way to quell these fields before they do their damage. There is a vast literature on complex mechanisms for doing this. Even so, some have become so desperate as to turn to the anthropic principle once again (maybe we live in the lucky zone where these fields aren't excited).

In the cyclic models, these fields would only have been excited when the cosmological constant was very large, which was a long, long, LONG time ago. There have been so many cycles (and these do not disturb the axions or the other fields) that there has been plenty of time for them to relax away to negligible values.

In other words, the same concept being used to solve the cosmological constant problem – namely, more time – is also automatically ensuring that axions and other light fields are not problematic.

Friday, May 05, 2006

Myths in the making

Or the unmaking, perhaps. It was such a lovely story: a mysterious but very real force of attraction between objects caused by the peculiar tendency of empty space to spawn short-lived quantum particles has a maritime analogue in which ships are attracted because of the suppression of long-wavelength waves between them. That’s what was claimed ten years ago, and it became such a popular component of physics popularization that, when he failed to mention it in his book ‘Zero’ (Viking, 2000; which explored this aspect of the quantum physics of emptiness), Charles Seife was taken to task. But it seems that no such analogy really exists – or at least, that there is no evidence for it. The myth is unpicked here.

This vacuum force is called the Casimir effect, and was identified by Dutch physicist Hendrik Casimir in 1948 – though, being so weak and operating at such short distances, it wasn’t until the late 1990s that it was measured directly. It provides fertile hunting ground for speculative and sometimes plain cranky ideas about propulsion systems or energy sources that tap into this energy of the vacuum. (And it certainly seems that there is a lot of energy there – or at least, there ought to be, but something seems to cancel it out almost perfectly, which is why our universe can exist in its present form at all. Here’s a new idea for where all this vacuum energy has gone.)

So how did the false story of a naval analogy start? It was suggested in a paper in the American Journal of Physics by Sipko Boersma. Just as two closely spaced plates suppress the quantum fluctuations of the vacuum at wavelengths longer than the spacing between them, so Boersma proposed that two ships side by side suppress sea waves in a heavy swell. By the same token, Boersma suggested that a ship next to a steep cliff or wall is repelled, because the ocean waves reflect at the wall without the phase shift that electromagnetic waves undergo at a conducting surface. This creates a kind of ‘virtual image’ of the ship within the wall, rolling perfectly out of phase – which reverses the sign of the force.

It sounds persuasive. But there doesn’t seem to be any evidence for such a force between real ships. The only real evidence that Boersma offered in his paper came from a nineteenth-century French naval manual by P. C. Caussée, where indeed a ‘certain attractive force’ was said to exist between ships moored close together. But Fabrizio Pinto has unearthed the old book, and he finds that the force was in fact said to operate only in perfectly calm (‘plat calme’, or flat calm) seas, not in wavy conditions. The engraving that Boersma showed from this manual was for a different set of circumstances, in a heavy swell (where the recommendation was simply to keep the ships apart so that their rigging doesn’t become entangled as they roll).

Regarding this discrepancy, Boersma says the following: “Caussée is not very exact. His mariners told him about ‘une certaine force attractive’ in calm weather and he made out of it an attraction on a flat sea… The reference to ‘Flat Calm’ is clearly an editing error; Caussée’s Album is not a scientific document. He should have referred his attraction to the drawing 14 ‘Calm with heavy swell’, or better still to the drawing 15 ‘Flat Calm’ but then modified with a long swell running. Having read my 1996 paper, one sees immediately what Caussée should have written.”

I’m not sure I follow this: it seems to mean not that Caussée made an ‘editing error’ but that he simply didn’t understand what he had been told about the circumstances in which the force operates. That might be so, but it requires that we take a lot on trust, and rewrite Caussée’s manual to suit a different conclusion. If Caussée was mistaken about this, should we trust him at all? And there doesn’t seem to be any other strong, independent evidence of such a force between ships.

But perhaps getting to the root of the confusion isn’t the point. The moral, I guess, is that it’s never a good idea to take such stories on trust – always check the source. Fabrizio says that scientists rarely do this; on the contrary, they embrace such stories as a part of the lore of their subject, and then react indignantly when they are challenged. “Because of the lamentable utter lack of philosophical knowledge background that afflicts many graduating students especially in the United States, sometimes these behaviors are closer to the tantrums of children who have learned too early of possible disturbing truths about Santa Claus”, he says. Well, that’s possible. Certainly, we would do well to place less trust in histories of science written by scientists, some of whom do not seem terribly interested in history as such but are more concerned simply to show how people stopped believing silly things and started believing good things (i.e. what we believe today). This Whiggish approach to history was abandoned by historians over half a century ago – strange that it still persists unchallenged among scientists. The ‘Copernican revolution’ is a favourite of physicists (it’s commonly believed that Copernicus got rid of epicycles, for instance), and popular retellings of the Galileo story are absurdly simplistic. (And while we’re at it, can we put an end to the notion that Giordano Bruno was burned at the stake because he believed in the heliocentric model? Or would that damage scientists’ martyr complex?) It may not matter so much that a popular idea about the Casimir effect seems after all to be groundless; it might be more important that this episode serves as a wake-up call not to be complacent about history.

Tuesday, May 02, 2006

Swarms

That's the title of an exhibition at the Fosterart gallery in Shoreditch, London, running until 14 May. The work is by Farah Syed, and there are examples of it here. Farah tells me she is interested in complexity and self-organization: "sudden irregularities brought about by a minute and random event; a swarm reassembling itself after the disturbance in its path." It looks to me like an interesting addition to the works of art that have explored ideas and processes related to complexity, several of which were discussed in Martin Kemp's book Visualizations (Oxford University Press, 2000).
